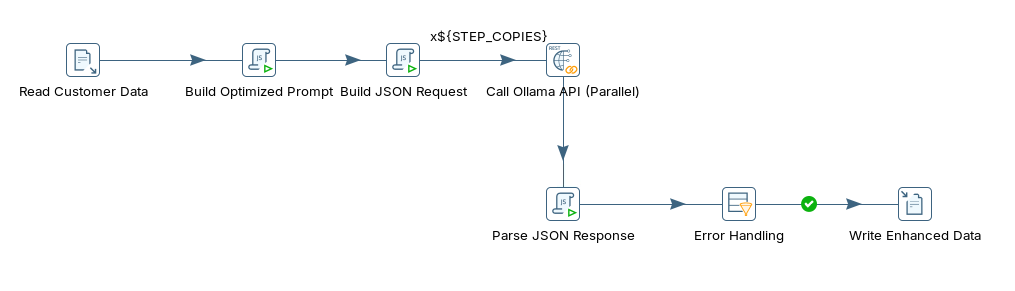

sentiment_analysis_optimized

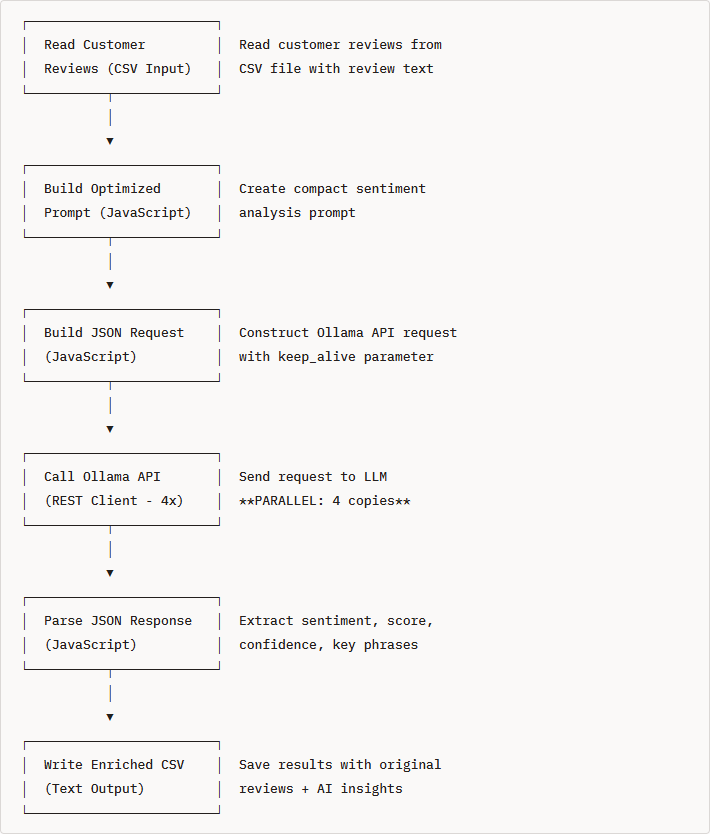

Response from sentiment analysis

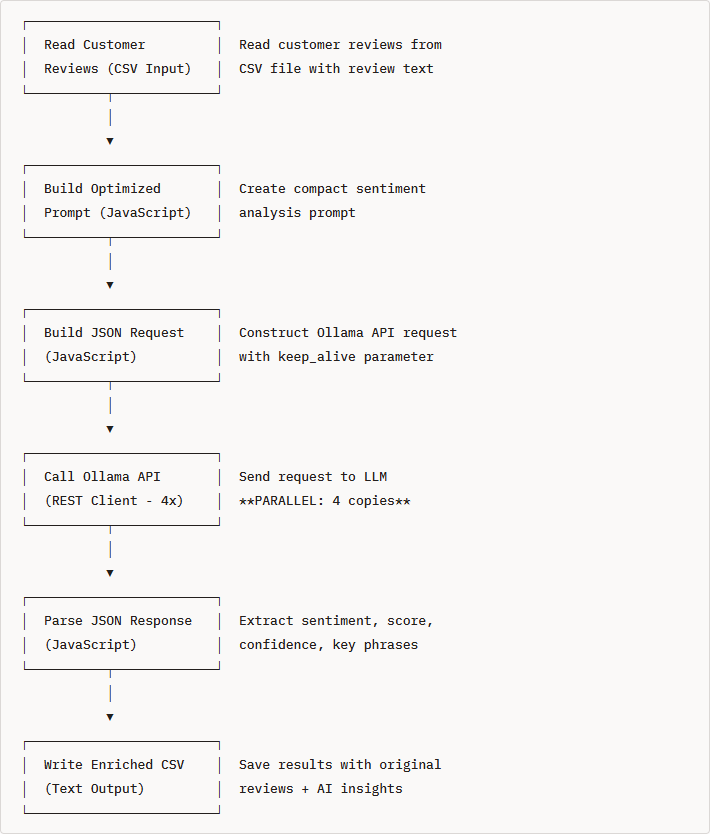

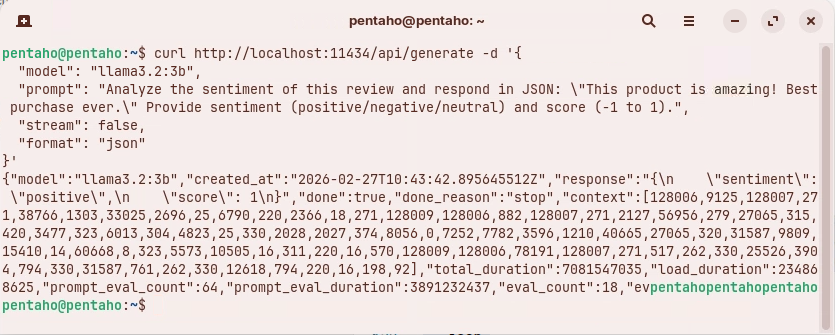

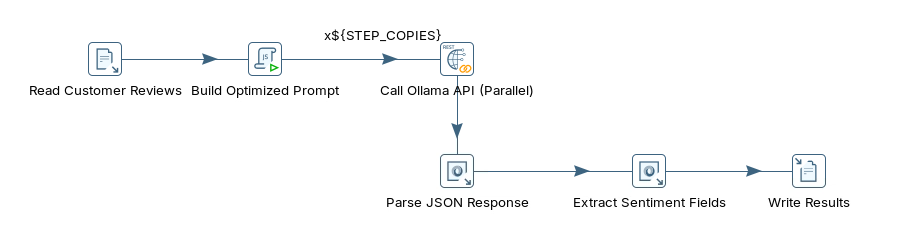

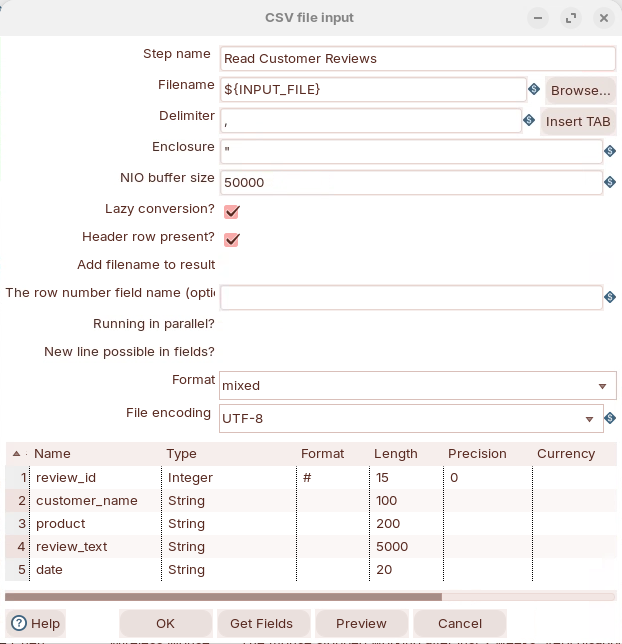

Sentiment Analysis

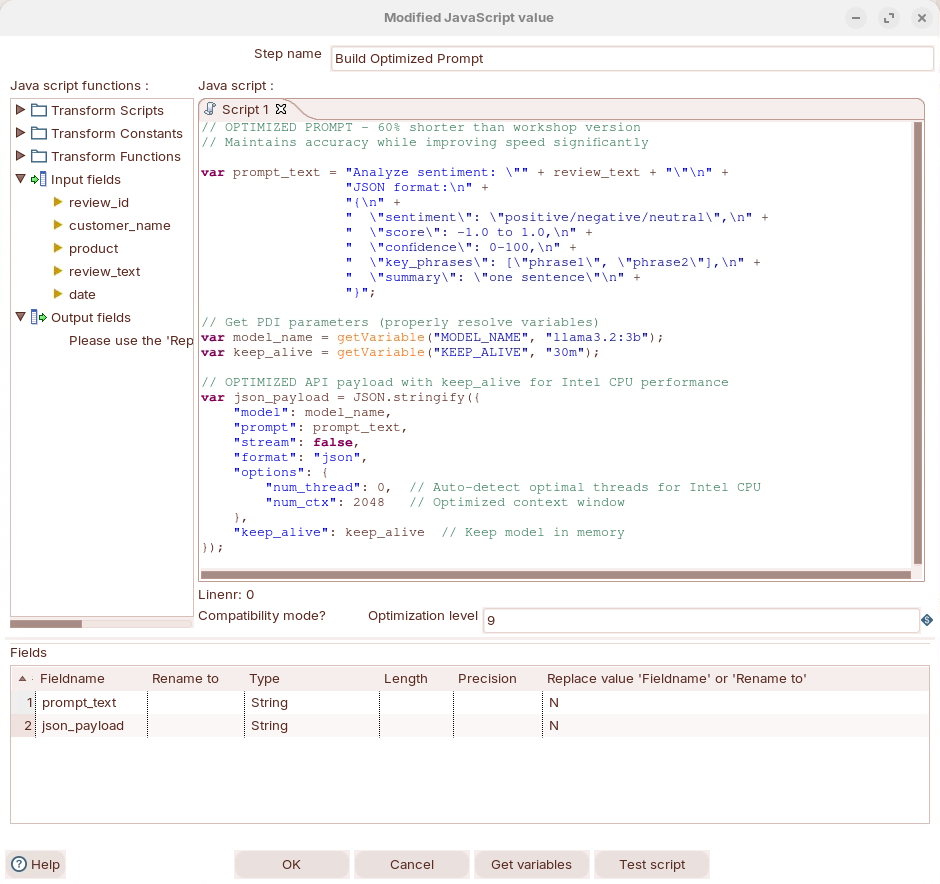

Build your prompt

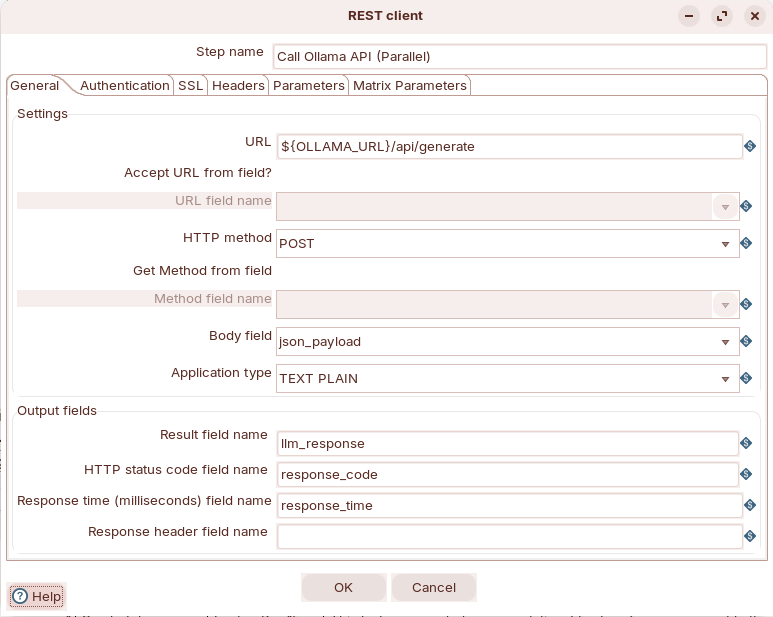

POST request to Ollama

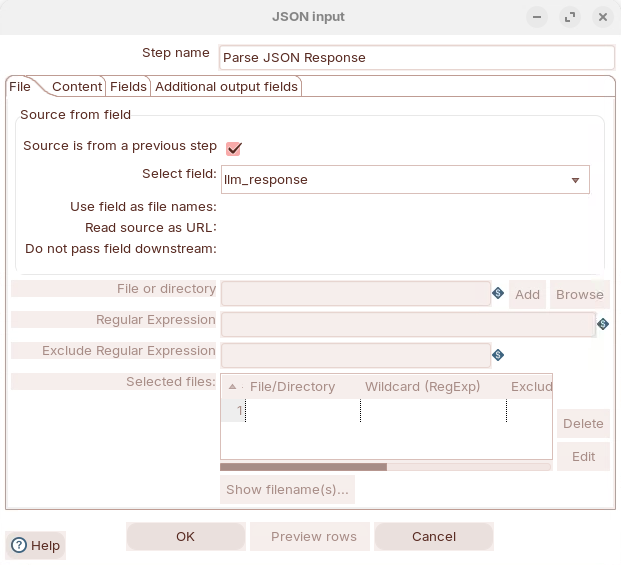

Parse the llm_response field

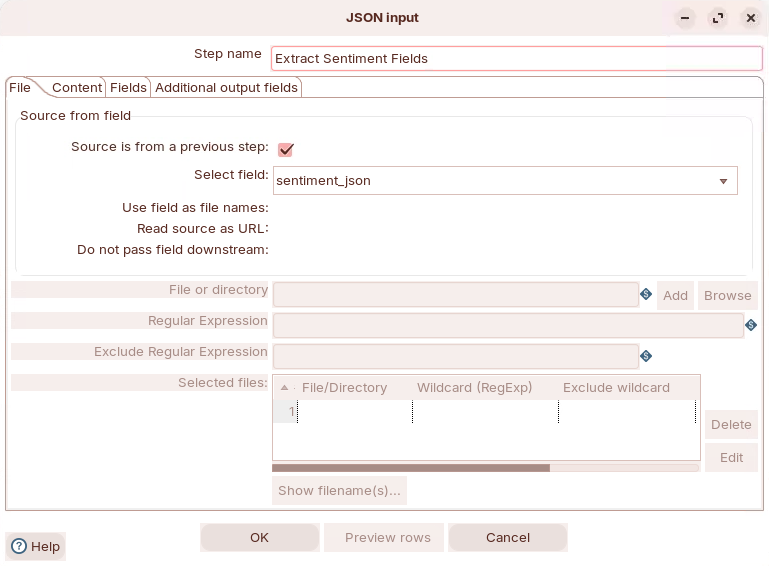

Extract sentiment fields

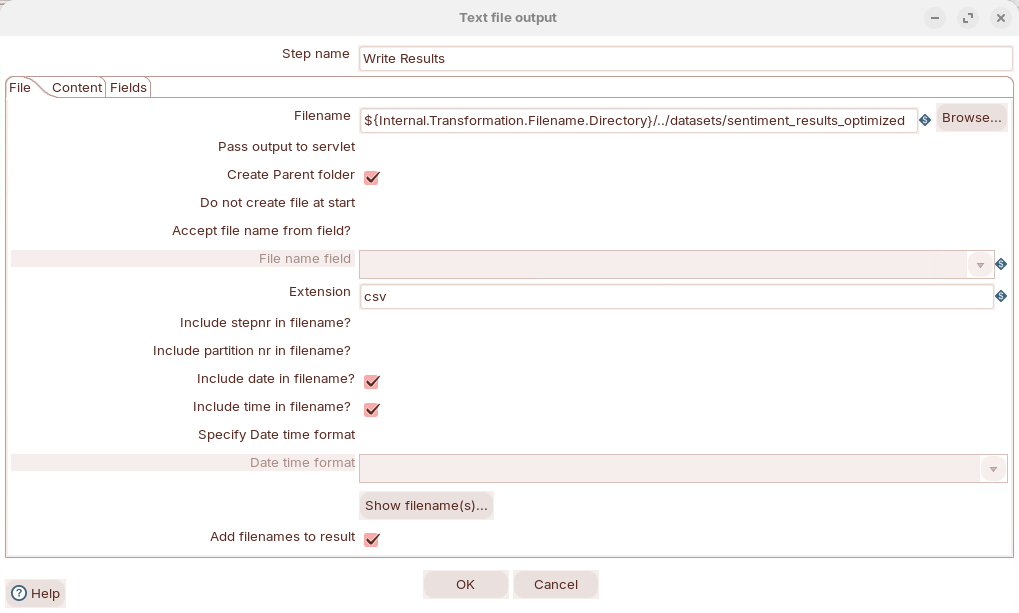

Output results

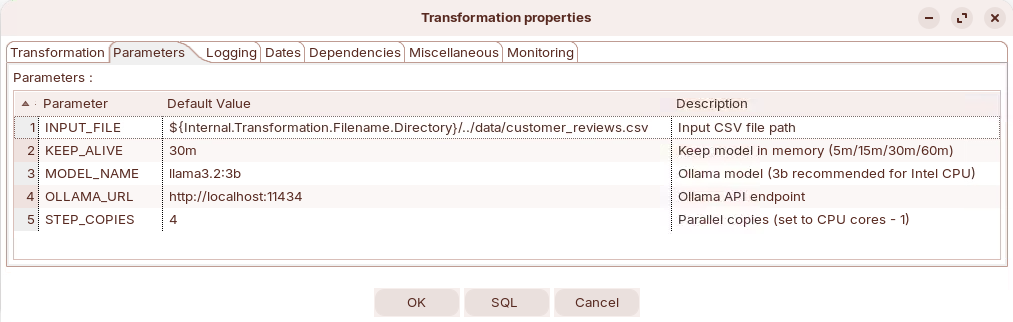
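
The five steps above (build the prompt, POST to Ollama, parse `llm_response`, extract the sentiment fields, output the results) can be sketched outside the visual tool as plain Python. This is a minimal sketch, not the workshop's actual transformation. It assumes `llm_response` holds the raw JSON body returned by Ollama's `/api/generate` endpoint with `"stream": false`, and that the model was asked to reply with the keys `sentiment`, `score`, and `confidence`. The function names and the prompt wording are hypothetical.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434"  # default from the parameter table
MODEL_NAME = "llama3.2:3b"             # default from the parameter table


def build_prompt(review_text):
    """Step 1: build the prompt (wording is an assumption, not the workshop's)."""
    return (
        "Classify the sentiment of this customer review. "
        'Reply with JSON only, using the keys "sentiment" '
        '(positive, negative, or neutral), "score" (-1.0 to 1.0), '
        'and "confidence" (0 to 100).\n\n'
        f"Review: {review_text}"
    )


def build_request_body(prompt, model=MODEL_NAME):
    """Serialize the JSON body for Ollama's /api/generate endpoint."""
    return json.dumps(
        {"model": model, "prompt": prompt, "stream": False, "format": "json"}
    )


def call_ollama(body, base_url=OLLAMA_URL):
    """Step 2: POST the request and return the raw response text."""
    request = urllib.request.Request(
        f"{base_url}/api/generate",
        data=body.encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=120) as response:
        return response.read().decode("utf-8")


def extract_sentiment(llm_response):
    """Steps 3 and 4: parse llm_response, then pull out the sentiment fields."""
    envelope = json.loads(llm_response)        # Ollama's response envelope
    result = json.loads(envelope["response"])  # the model's own JSON reply
    return {
        "sentiment": str(result.get("sentiment", "unknown")).lower(),
        "score": float(result.get("score", 0.0)),
        "confidence": float(result.get("confidence", 0.0)),
    }
```

With a running Ollama instance, step 5 reduces to `print(extract_sentiment(call_ollama(build_request_body(build_prompt(text)))))`. Keeping the network call in its own function means the parsing logic can be checked against a saved response without a model loaded.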

| Parameter | Default Value | Description |
|---|---|---|
| OLLAMA_URL | http://localhost:11434 | Ollama API endpoint |
| MODEL_NAME | llama3.2:3b | Model to use for analysis |
| INPUT_FILE | ../data/customer_reviews.csv | Input data path |

| Parameter | Default Value | Description |
|---|---|---|
| OLLAMA_URL | http://localhost:11434 | Ollama API endpoint |
| MODEL_NAME | llama3.2:3b | Model to use for analysis |
| INPUT_FILE | ../data/customer_reviews.csv | Input data path |
| KEEP_ALIVE | 30m | Keep model in memory (5m/15m/30m/60m) |
| STEP_COPIES | 4 | Parallel copies (set to CPU cores - 1) |
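
For readers scripting against the same defaults, the parameters above can be mirrored in a small configuration loader. This is a sketch under assumptions: reading overrides from environment variables is a choice made here, not something the workshop prescribes, and `load_config`, `suggested_step_copies`, and `generate_options` are hypothetical helper names. The `keep_alive` request field is part of Ollama's generate API.

```python
import os

# Defaults copied from the parameter table above.
DEFAULTS = {
    "OLLAMA_URL": "http://localhost:11434",
    "MODEL_NAME": "llama3.2:3b",
    "INPUT_FILE": "../data/customer_reviews.csv",
    "KEEP_ALIVE": "30m",
    "STEP_COPIES": "4",
}


def load_config(env=None):
    """Return the parameters, letting environment variables override defaults."""
    env = os.environ if env is None else env
    config = {name: env.get(name, default) for name, default in DEFAULTS.items()}
    config["STEP_COPIES"] = int(config["STEP_COPIES"])
    return config


def suggested_step_copies(cpu_count=None):
    """Apply the 'CPU cores - 1' rule of thumb, never going below one copy."""
    cores = cpu_count if cpu_count is not None else (os.cpu_count() or 1)
    return max(1, cores - 1)


def generate_options(config):
    """Fields to merge into an Ollama request so the model stays loaded."""
    return {"model": config["MODEL_NAME"], "keep_alive": config["KEEP_ALIVE"]}
```

Leaving one core free keeps the machine responsive while the parallel copies wait on the model; the right number still depends on how many concurrent requests the Ollama host can serve.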

| Review | Customer | Product | Sentiment | Score | Confidence | Result |
|---|---|---|---|---|---|---|
| 1 | Sarah Johnson | Laptop Pro 15 | positive | 0.9 | 90% | ✅ Correct |
| 2 | Mike Chen | Wireless Mouse | negative | -0.6 | 80% | ✅ Correct |
| 3 | Emily Rodriguez | USB-C Hub | neutral | -0.33 | 70% | ✅ Correct |

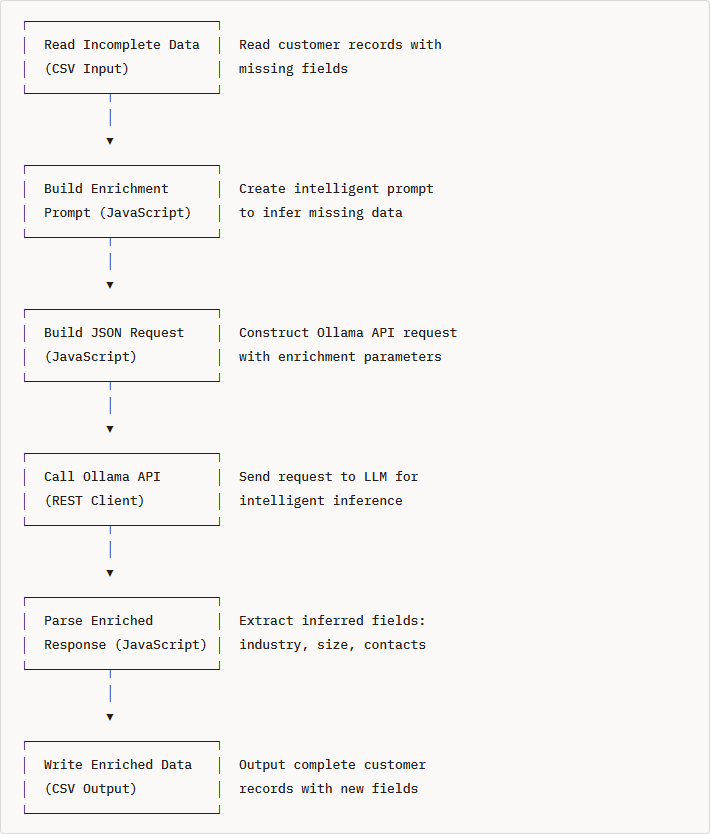

data_quality_optimized

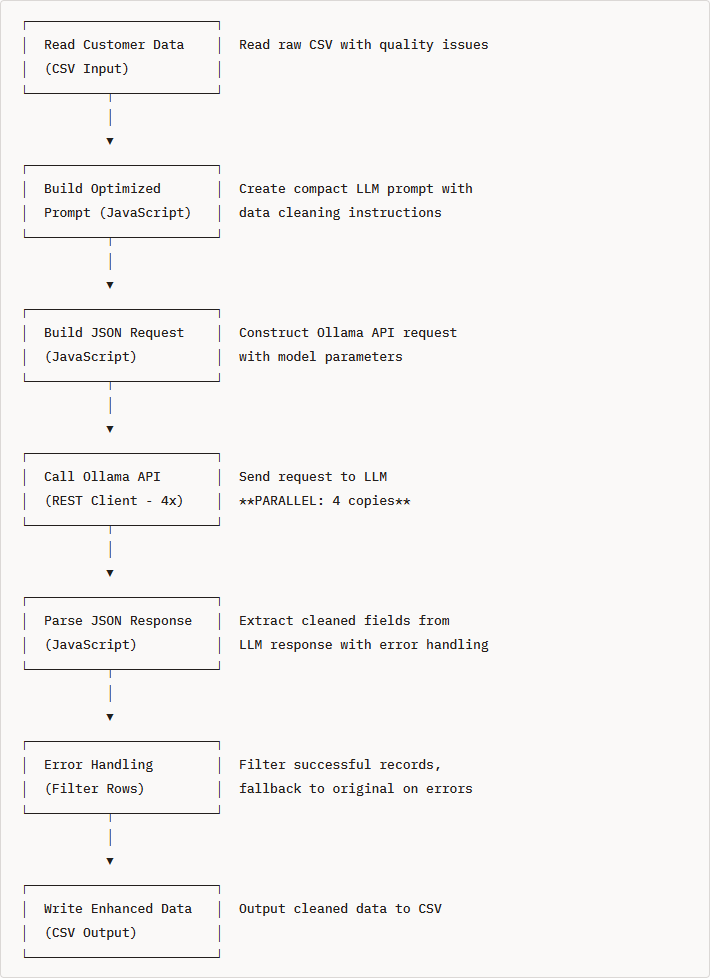

data_quality

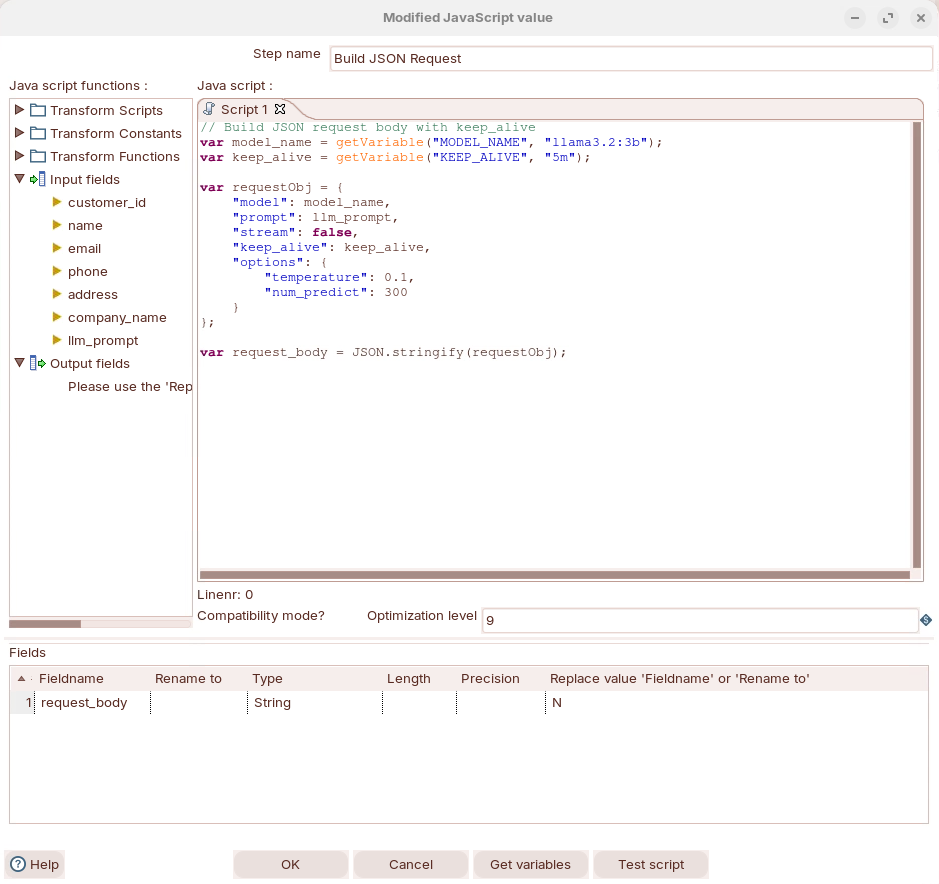

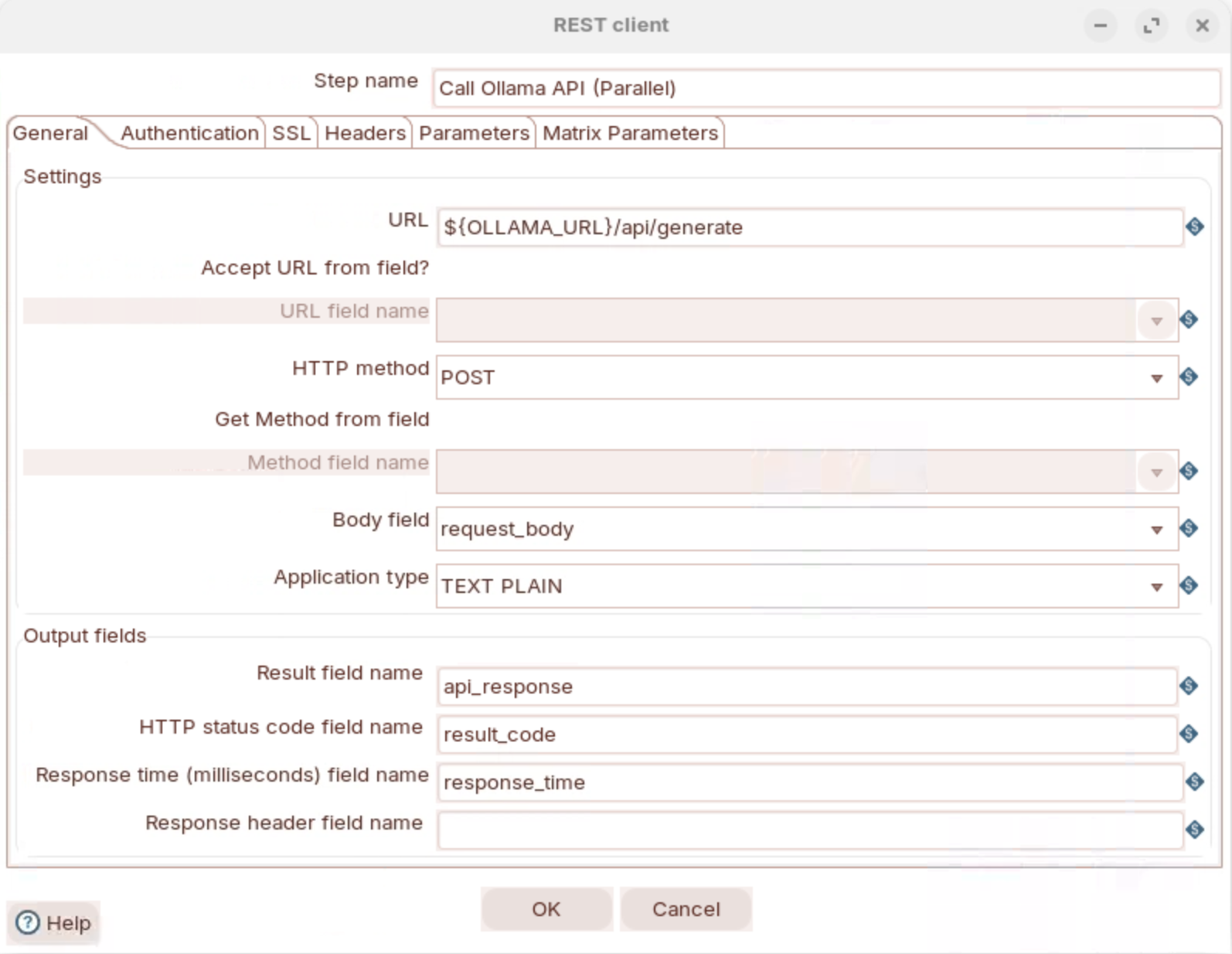

Build API request

Call Ollama API

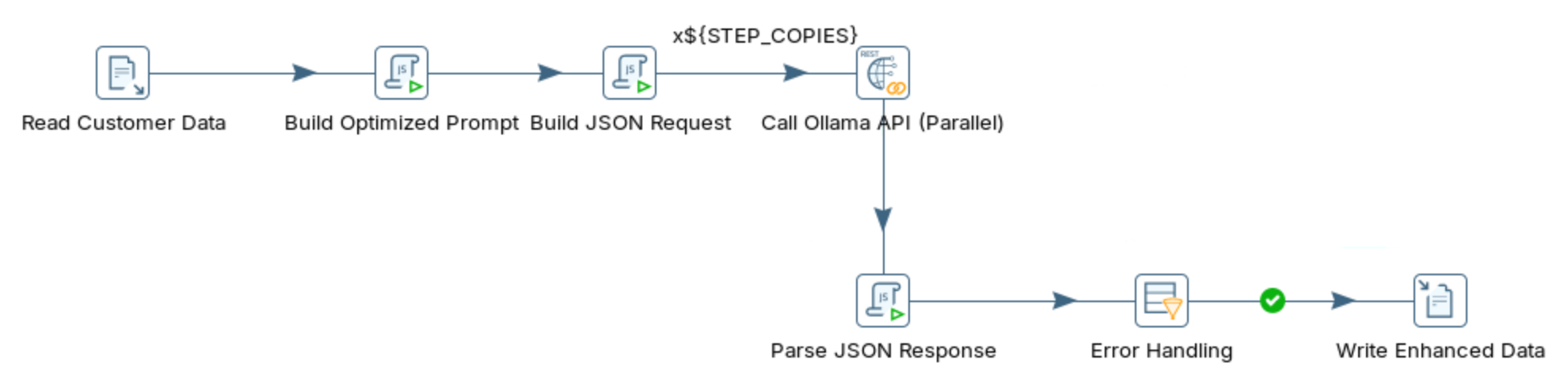

data_enrichment_optimized

| Field | Enrichment strategy | Example |
|---|---|---|
| Website | Infer from company name + domain patterns | acmecorp.com → www.acmecorp.com |
| Contact | Keep as UNKNOWN if not provided | John Smith or UNKNOWN |
| Phone | Infer area code from city/state | 555-1234 → +1-415-555-1234 (SF) |
| Address | Keep street or mark UNKNOWN | 123 Main St or UNKNOWN |
| City | Infer from state if missing | Texas → Houston (likely) |
| State | Infer from city or use 2-letter code | California → CA |
| Country | Default to USA if empty | USA |
| Industry (new) | Classify from company name/website | TechStart Inc → Technology |
| Employee Range (new) | Estimate from company name patterns | Acme Corp → 51-200 |
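
Several rows in the table above are deterministic defaults rather than inference, so they can be applied before (or instead of) an LLM call. The sketch below covers only those rows: Contact, Address, State, and Country. The `enrich_record` function, the lowercase field names, and the two-entry state map are illustrative assumptions; the inference rows (Website, Phone, City, Industry, Employee Range) are deliberately left to the model.

```python
# Illustrative subset only; a real mapping would cover every state.
STATE_CODES = {"california": "CA", "texas": "TX"}


def enrich_record(record):
    """Apply the rule-based rows of the enrichment table to one record."""
    enriched = dict(record)  # leave the caller's record untouched

    # Contact and Address: keep the value, or mark UNKNOWN when missing.
    for field in ("contact", "address"):
        if not (enriched.get(field) or "").strip():
            enriched[field] = "UNKNOWN"

    # Country: default to USA if empty.
    if not (enriched.get("country") or "").strip():
        enriched["country"] = "USA"

    # State: convert a known full name to its 2-letter code, else keep as-is.
    state = (enriched.get("state") or "").strip()
    enriched["state"] = STATE_CODES.get(state.lower(), state)

    return enriched
```

Handling these fields in code keeps the prompt shorter and confines the accuracy risk to the fields that genuinely need inference.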

| Aspect | Data Quality (Workshop 2) | Data Enrichment (Workshop 3) |
|---|---|---|
| Input | Complete, messy data | Incomplete, clean data |
| Process | Standardize and validate | Infer and classify |
| Output | Clean existing fields | Add new fields |
| Risk | Low (validation) | Medium (inference accuracy) |
| Value-add | Consistency | New business intelligence |

data_quality

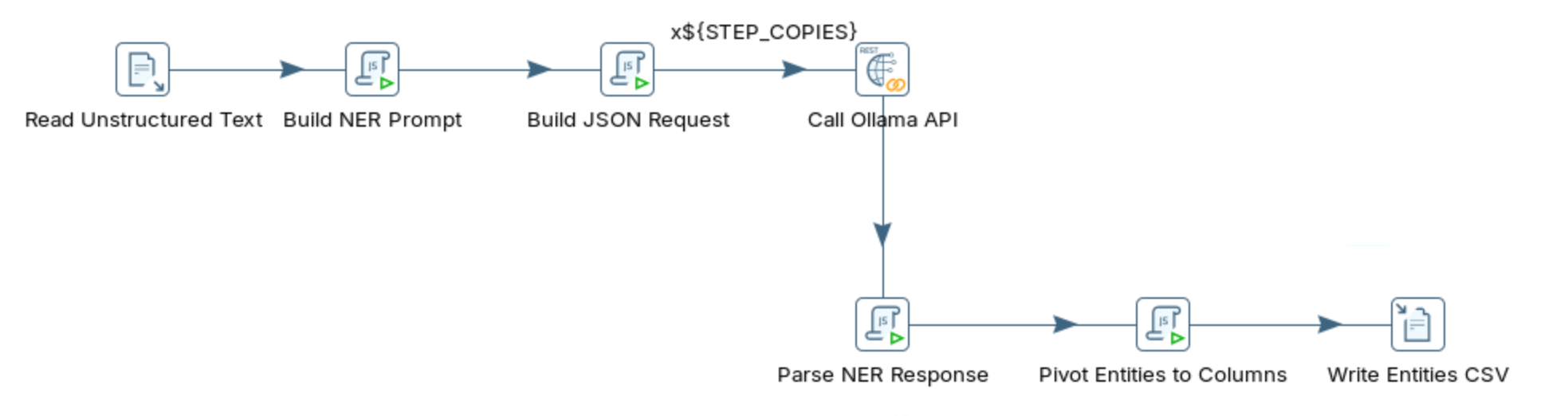

named_entity_recognition_optimized

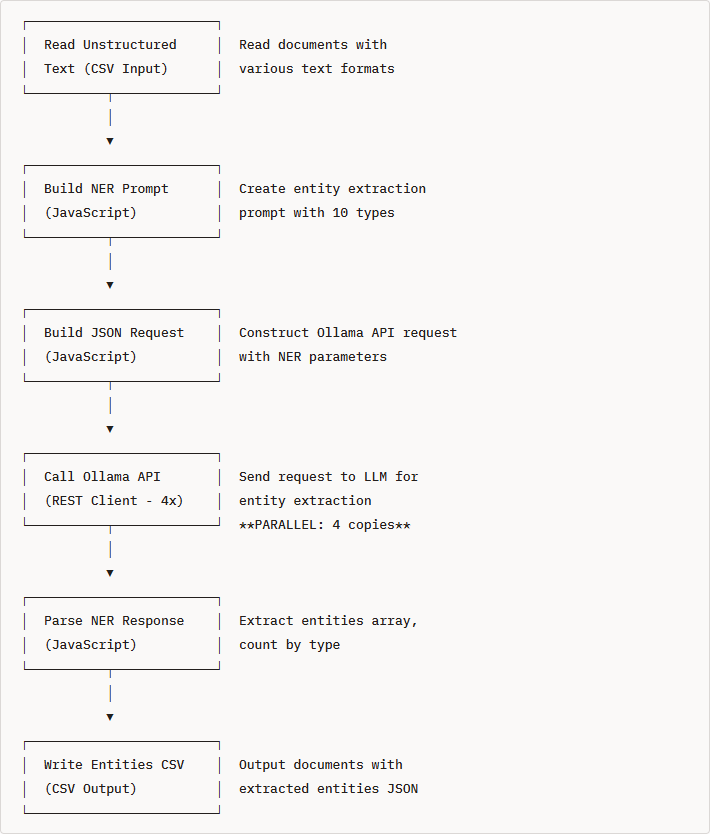

named_entity_recognition

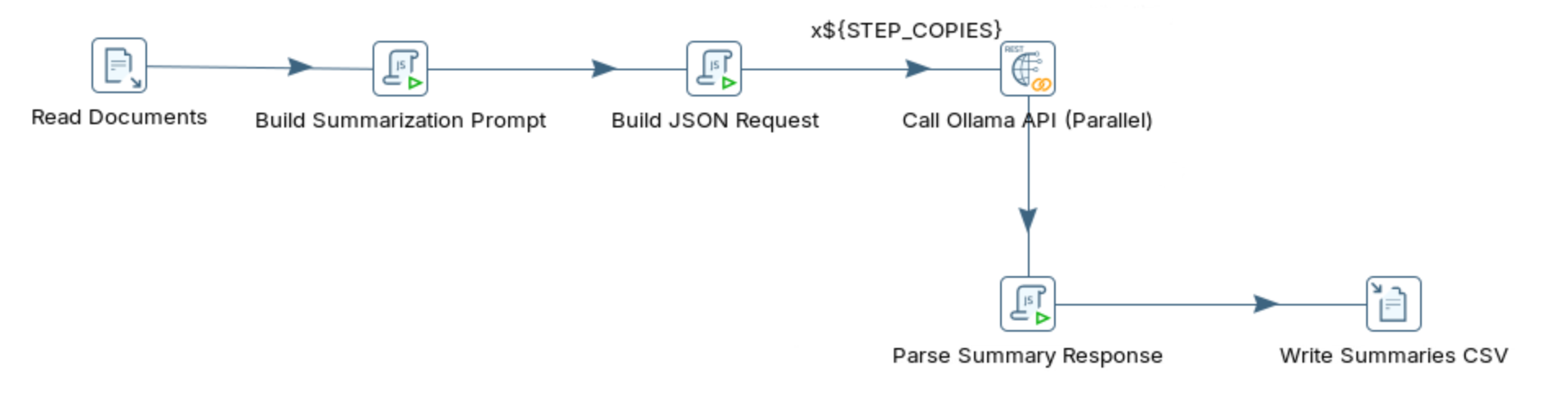

text_summarization_optimized

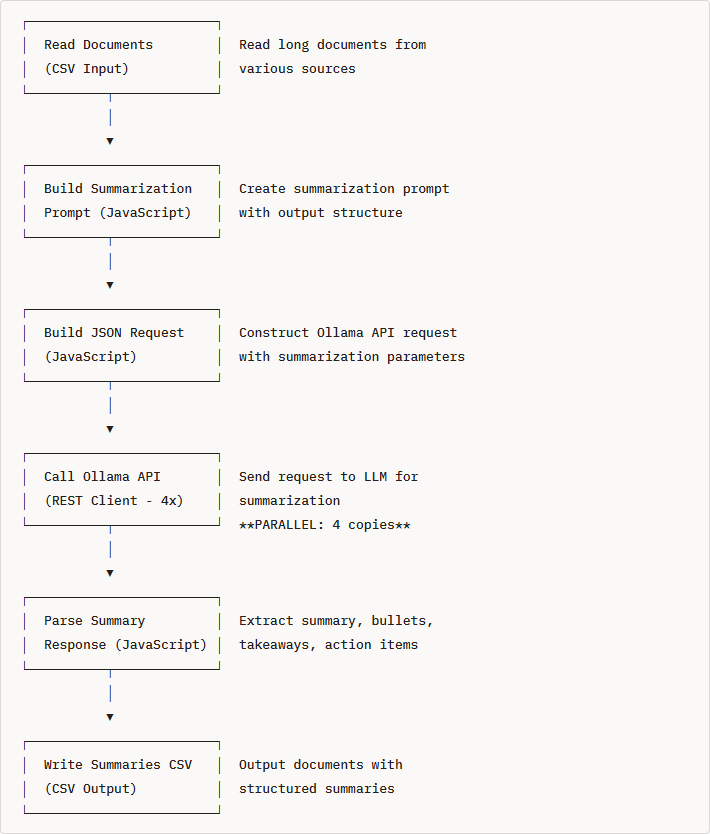

text_summarization

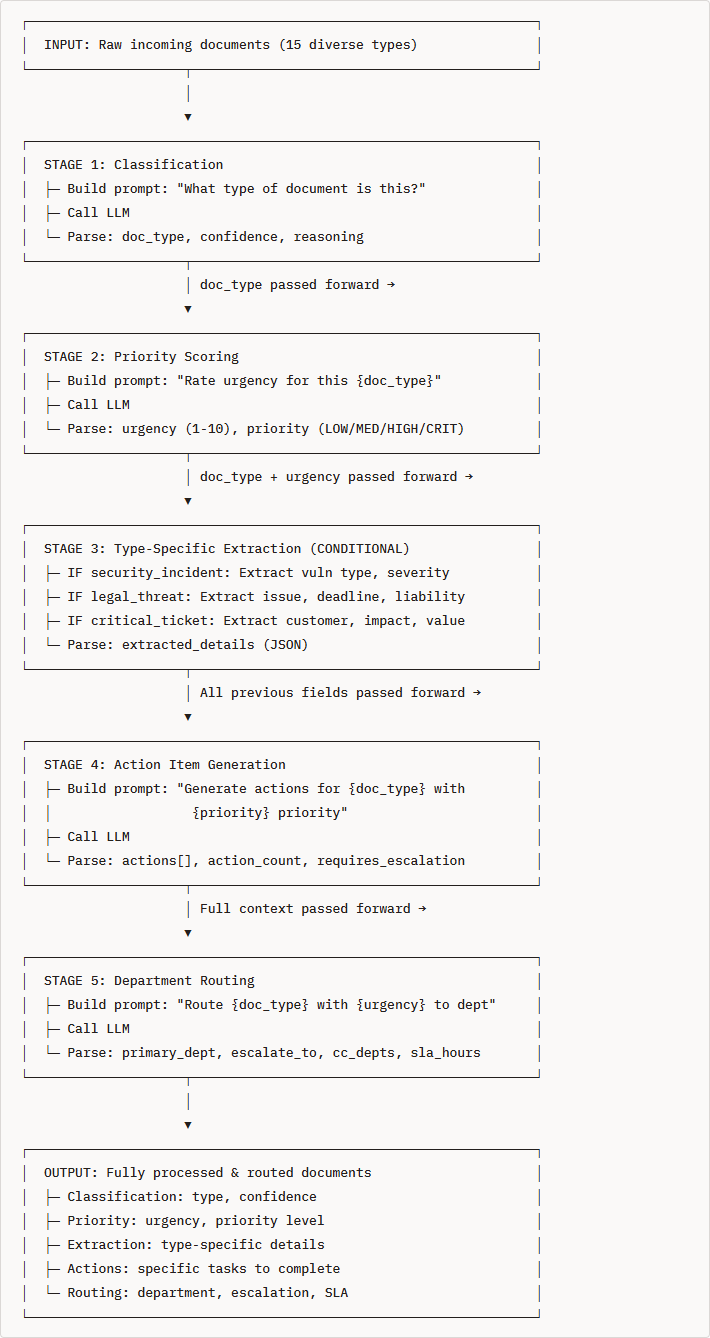

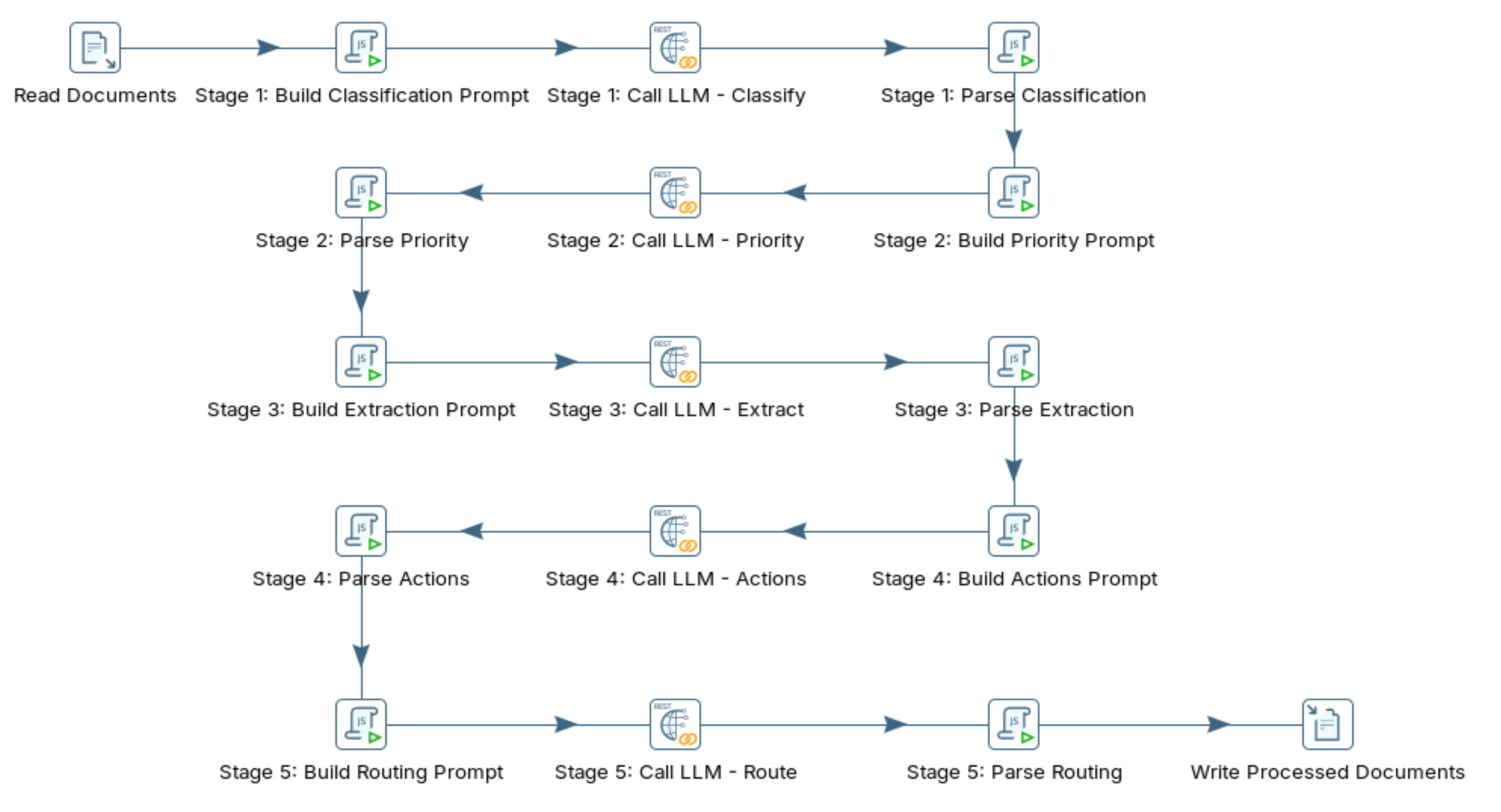

multi_staged_optimized

| Aspect | Single-Stage | Multi-Stage |
|---|---|---|
| Prompt Length | 200+ tokens | 40-80 tokens per stage |
| Accuracy | 65-75% (trying to do too much) | 85-95% (focused tasks) |
| Processing Time | 60-90 seconds | 50-70 seconds (5 calls @ 10-14s each) |
| Cost per Document | High (long prompt) | Lower (multiple short prompts) |
| Conditional Logic | ❌ Not possible | ✅ Full support |
| Error Handling | ❌ All-or-nothing | ✅ Per-stage recovery |
| Debugging | ❌ Hard to isolate issues | ✅ Know exactly which stage failed |
| Auditability | ❌ Black box decision | ✅ Track reasoning at each stage |
| Extensibility | ❌ Hard to add features | ✅ Easy to add new stages |
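
The multi-stage column above (conditional logic, per-stage recovery, and a per-stage audit trail) can be illustrated with a short runner. This is a sketch, not the workshop's transformation: the stage names `classify`, `extract`, and `summarize` and their prompts are hypothetical, and `call_llm` stands in for whatever function sends a prompt to the model and returns its text.

```python
def run_stages(document, stages, call_llm):
    """Run short, focused prompts in order, recording what happened at each stage.

    Each stage is a tuple (name, build_prompt, should_run). Both callables
    receive the shared context dict, so later stages can use earlier results.
    """
    context = {"document": document}
    audit = []
    for name, build_prompt, should_run in stages:
        # Conditional logic: a stage may be skipped based on earlier output.
        if not should_run(context):
            audit.append((name, "skipped"))
            continue
        try:
            context[name] = call_llm(build_prompt(context))
            audit.append((name, "ok"))
        except Exception as exc:
            # Per-stage recovery: record the failure and keep going.
            context[name] = None
            audit.append((name, f"failed: {exc}"))
    return context, audit


# Hypothetical stages for illustration; the workshop's stages may differ.
EXAMPLE_STAGES = [
    ("classify",
     lambda ctx: f"Classify this document: {ctx['document']}",
     lambda ctx: True),
    ("extract",
     lambda ctx: f"Extract key fields from this {ctx['classify']}: {ctx['document']}",
     lambda ctx: ctx.get("classify") is not None),
    ("summarize",
     lambda ctx: f"Summarize in one sentence: {ctx['document']}",
     lambda ctx: True),
]
```

The `audit` list is what makes a failure traceable to a single stage. On timing, five sequential calls at 10 to 14 seconds each give the 50 to 70 seconds quoted in the table, so any stage that `should_run` skips shortens the total by one call.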

multi-staged